Latest Research: The Generational AI Divide Is Becoming a Structural Risk for Professions

There is a tendency to treat AI as a tool adoption story, something that can be tracked through usage rates and platform uptake. That framing feels increasingly inadequate when you sit with the latest data for long enough. What emerges is less about technology and more about capability, judgement, and how that capability is distributed across different parts of your profession.

The latest research from RMIT and Deloitte Access Economics, “Beyond Prompting: Measuring the Generational AI Gap”, suggests that we are now dealing with a generational divide that is shaping how work is done, how decisions are made, and ultimately how sustainable a profession will be over time.

The numbers themselves are familiar at first glance, but the relationships between them tell a more consequential story.

Eighty-four per cent of Australian workers are already using AI, yet only seven per cent are proficient, with more than half still fumbling at beginner level.

A workforce using AI without the experience to challenge it

The most telling detail in the report sits a layer below those headline figures. Workers are roughly twice as capable in technical execution as they are in judgement-based application.

Around 21 per cent of workers have advanced-level technical AI skills, compared with just 11 per cent in judgement-based skills.

In other words, they can prompt. They can generate. They can automate. But fewer can properly evaluate what comes back, question its assumptions, or recognise when something should not be used at all.

From an association perspective, this matters because professions are built on judgement, not output. If that layer of judgement is uneven, then the quality and consistency of professional practice become uneven as well.

The generational divide is now shaping risk

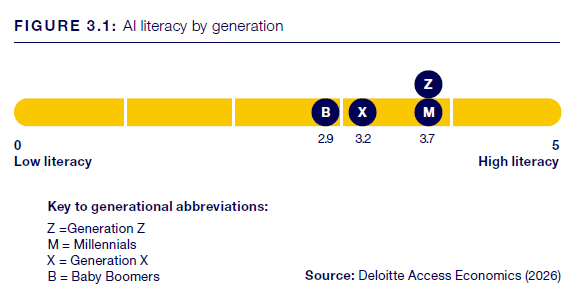

The generational differences in the data are stark. Younger professionals are more fluent with AI tools and more likely to experiment. Older professionals tend to move more cautiously and remain less engaged at a technical level.

Yet that fluency comes with a degree of overconfidence. The report notes that younger workers are significantly more likely to overrate their AI capability, which increases the likelihood of misuse or uncritical reliance on outputs.

The result is not just a skills gap. It is a misalignment between capability and accountability.

The research does not explicitly state this, but it is a reasonable reading when you consider how capability is distributed.

Those most comfortable using AI are not always those accountable for its outcomes.

Those responsible for oversight are not always confident in its application.

Training, productivity, and the emerging wage effect

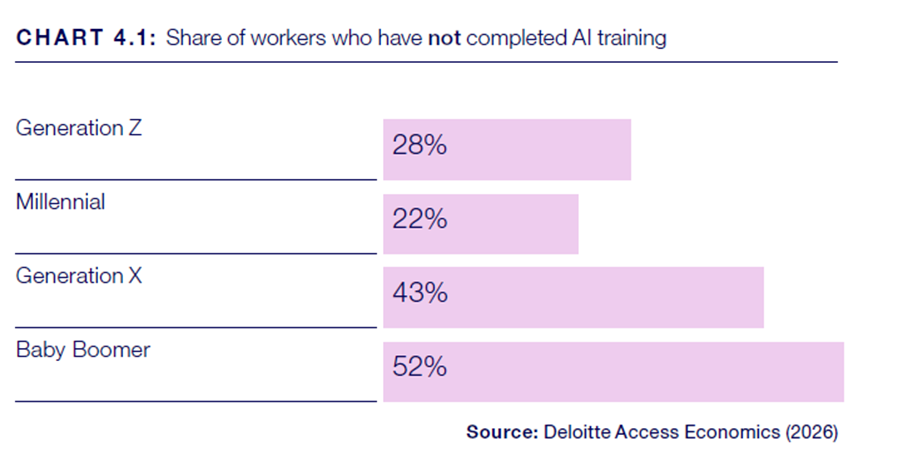

Training patterns reinforce this divide in a way that is hard to ignore.

Older workers are roughly twice as likely to have completed no AI training in the past year, with 52 per cent reporting no training compared with 22 per cent of millennials and 28 per cent of Gen Z. Among those who do engage, younger workers are also investing more time, averaging around 45 hours of training annually compared with 35 hours for older cohorts.
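As a quick check on the "twice as likely" claim, the ratios can be worked out directly from the percentages quoted above. This is a minimal sketch only; the variable and function names are illustrative and do not come from the report.

```python
# Share of each cohort reporting no AI training in the past year
# (percentages as quoted in the paragraph above).
no_training_pct = {"older workers": 52, "millennials": 22, "gen z": 28}


def ratio_vs_older(cohort: str) -> float:
    """How many times more likely older workers are to report no training than `cohort`."""
    return no_training_pct["older workers"] / no_training_pct[cohort]


ratios = {c: round(ratio_vs_older(c), 2) for c in ("millennials", "gen z")}
print(ratios)
```

On these figures, older workers are a little more than twice as likely as millennials, and a little less than twice as likely as Gen Z, to have gone without training.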

The report also makes something else clear. This is not just about skills. It is about economic outcomes.

Workers using AI effectively are reporting meaningful productivity gains, in some cases saving up to eleven hours per week. That changes how work gets done, and those gains are already translating into measurable economic value.

According to Deloitte Access Economics estimates, for the average full-time Australian worker, moving from beginner to intermediate AI capability is associated with an uplift of around $7,000 annually. Progressing from beginner to advanced capability lifts that figure to almost $11,000 in additional wages.
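The two figures above also imply a rough value for the step from intermediate to advanced. The sketch below treats the quoted amounts as round numbers ("around $7,000" and "almost $11,000"), so the result is an approximation rather than a figure from the report, and the names used are illustrative.

```python
# Approximate annual wage uplift relative to beginner level (AUD), as quoted above.
# "Almost $11,000" is rounded up to 11_000 here, which is an assumption.
UPLIFT_FROM_BEGINNER = {"beginner": 0, "intermediate": 7_000, "advanced": 11_000}


def implied_step(lower: str, upper: str) -> int:
    """Difference in quoted uplift between two capability levels."""
    return UPLIFT_FROM_BEGINNER[upper] - UPLIFT_FROM_BEGINNER[lower]


intermediate_to_advanced = implied_step("intermediate", "advanced")
print(intermediate_to_advanced)
```

On these rounded numbers, the first step (beginner to intermediate) carries most of the associated uplift, with the second step adding roughly $4,000 at most. These are associations in the estimates, not guaranteed pay rises.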

Capability is starting to show up in pay packets. And like capability, those gains are uneven.

The divide between large organisations and everyone else

This is where the generational divide and the structural divide start to reinforce each other, with implications for many associations.

Large organisations are beginning to respond in a coordinated way. Many are investing heavily in enterprise-wide AI training, building internal governance frameworks, and actively managing how tools are used across their workforce. In Australia, institutions such as the Commonwealth Bank have publicly committed to upskilling their workforce at scale, recognising that capability needs to be built deliberately.

But most professionals, and consequently most association members, do not work in that environment. They work in small firms, or run their own businesses, or operate in settings where formal training, support and governance structures are limited.

And this is precisely where associations have a role that is difficult to replicate elsewhere. For members operating outside large organisations, the association may be the only place where structured, profession-specific guidance exists, where capability can be built with some consistency, and where standards can be interpreted in light of how AI is actually being used.

Without that intervention, the gap does not just persist. It widens.

This is where an AI Skills Readiness Survey becomes practical

The RMIT and Deloitte Access Economics research outlines a multi-dimensional view of AI literacy, spanning technical skills, critical evaluation, ethical awareness, and strategic application.

That framework is informative for associations, but only to a point. For associations, the value lies in translation.

A profession-specific AI Skills Readiness Index survey allows that framework to be grounded in the actual work members are doing, with the actual tools they are using, and within the regulatory, ethical, and commercial constraints they operate under.

It allows associations to see exactly where capability sits within their workforce and where confidence is misplaced. It also makes visible the differences that matter, including those between large organisations and smaller operators, and between different cohorts within the profession.
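One way a survey could surface misplaced confidence is to compare how members rate themselves with how they score on an assessed task, then break the gap down by cohort and organisation size. The sketch below is purely illustrative: the field names, the 1 to 5 scale, and every response are hypothetical and are not drawn from the RMIT and Deloitte Access Economics research.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical survey responses. All fields, scales and values are made up
# for illustration; scores are on an assumed 1 (low) to 5 (high) scale.
responses = [
    {"cohort": "gen_z", "org_size": "small", "self_rated": 4, "assessed": 2},
    {"cohort": "gen_z", "org_size": "large", "self_rated": 4, "assessed": 3},
    {"cohort": "older", "org_size": "small", "self_rated": 2, "assessed": 2},
    {"cohort": "older", "org_size": "large", "self_rated": 2, "assessed": 3},
]


def confidence_gap_by(rows, key):
    """Mean of (self-rated minus assessed) per group; positive suggests overconfidence."""
    groups = defaultdict(list)
    for row in rows:
        groups[row[key]].append(row["self_rated"] - row["assessed"])
    return {group: mean(gaps) for group, gaps in groups.items()}


gap_by_cohort = confidence_gap_by(responses, "cohort")
gap_by_org_size = confidence_gap_by(responses, "org_size")
print(gap_by_cohort)
print(gap_by_org_size)
```

A positive gap flags a group whose confidence runs ahead of its assessed capability, while a negative gap flags a group that may be more capable than it believes. A real index would need a validated assessment and a much larger sample.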

What this means for associations

The research has real implications for associations, as it points to a workforce that has adopted AI, but not evenly and not with consistent capability.

Some members are supported by structured training and systems. Others are working it out alone, often carrying more risk. Some are confident but not yet capable, while others remain cautious and under-engaged. Factor in the productivity gains, emerging wage premiums, and uneven access to training, and the pattern is clear.

This is not just a skills issue. It is structural. And associations sit across this divide.

They see both ends of it. The employee with support, and the sole practitioner figuring it out in real time. That spread matters, because the capability gap is already shaping how work is done and how standards hold up.

If that gap continues to widen, the role of the association moves from helpful to essential.

But it requires a shift in focus from:

- Broad thought leadership to profession-specific insight
- One-size-fits-all training to targeted capability building
- Assumptions about member needs to measured evidence

Associations that make this shift will do more than support their members through change. They will sit at the centre of how those members adapt, stay relevant, and remain employable.

And in a market where even strong organisations are restructuring while investing in AI, that kind of relevance carries real weight.